Artificial intelligence in healthcare

A lethal autonomous weapon is a machine that locates, selects and engages human targets without human supervision. Widely available AI tools can be used by bad actors to develop inexpensive autonomous weapons and, if produced at scale, they are potentially weapons of mass destruction (https://www.finextra.com/blogposting/27302/artificial-intelligence-ai-and-software-defined-radio-sdr). Even when used in conventional warfare, they are unlikely to choose targets reliably and could potentially kill an innocent person. In 2014, 30 nations (including China) supported a ban on autonomous weapons under the United Nations’ Convention on Certain Conventional Weapons; however, the United States and others disagreed. By 2015, over fifty countries were reported to be researching battlefield robots.

Overall, AI systems work by leveraging data, algorithms, and computational power to learn from experience, make decisions, and perform tasks autonomously. The specific workings of an AI system depend on its architecture, algorithms, and the nature of the tasks it’s designed to accomplish.

The application of AI in medicine and medical research has the potential to improve patient care and quality of life. Through the lens of the Hippocratic Oath, medical professionals are ethically compelled to use AI if its applications can diagnose and treat patients more accurately.

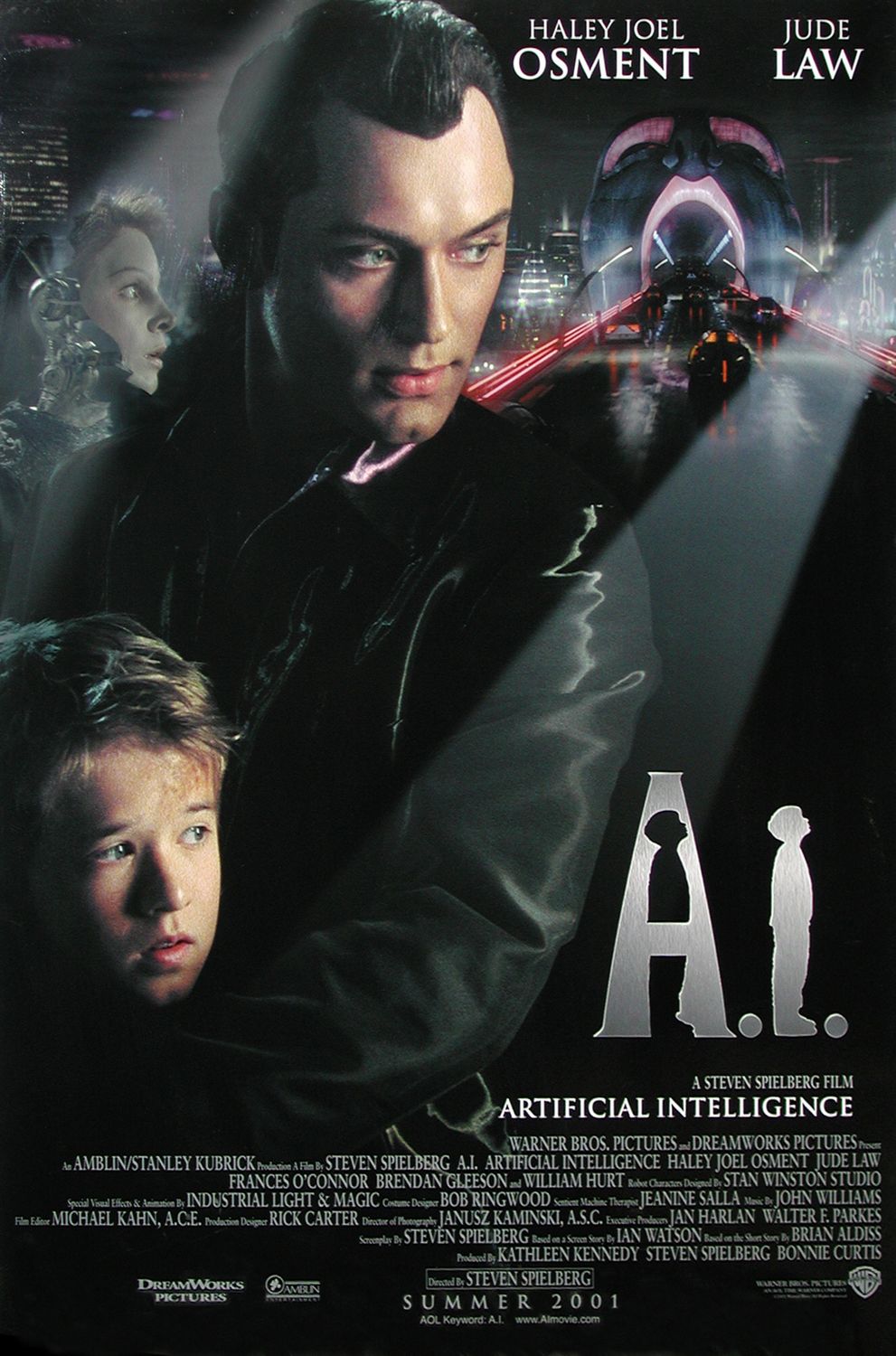

Artificial intelligence movie

Mother/Android shoves all kinds of horrors right in the audience’s face. There are scenes where phones explode, killing their users, and androids issue creepy messages such as wishing people Happy Halloween rather than Merry Christmas. Overall, it’s a sad tale that shows how cruel machines can be if things get out of control.

OK, so one action-packed blockbuster has crept onto this list! I, Robot centres on the importance of setting rules for AI to follow and on the extent to which we can predict and control how those rules might be interpreted. Though it strays far from the Isaac Asimov short-story collection it is named after, there is plenty in here that relates to current developments and discussions around building safeguards into AI systems.

Chappie’s single greatest accomplishment is the way it makes you feel for this CGI robot. Chappie learns as a child does, just faster, but the young A.I. is extremely impressionable. The audience is made to feel like Chappie’s parents. We love to see his excitement when he learns to paint and express himself, but we feel dread as he is thrust into the world of petty crime, tricked and manipulated into becoming a “robot gangster.” One sequence depicts such graphic violence inflicted on Chappie that it borders on emotional manipulation.

It’s the year 2194 in Jung_E, and, as expected, the Earth has become uninhabitable. Everyone now lives in shelters. Meanwhile, a team of scientists attempts to develop an AI version of Yun Jung-yi, a soldier feared dead who once helped fight the rebels who had broken away from the shelters and founded their own republic. The film is the brainchild of Yeon Sang-ho, best known for making Train to Busan, one of the greatest zombie movies.

A masterpiece of science fiction cinema, 2001: A Space Odyssey transcends traditional storytelling to present a mesmerizing visual and auditory experience that explores humanity’s place in the cosmos. Directed by legendary filmmaker Stanley Kubrick, who co-wrote the screenplay with Arthur C. Clarke, the film interweaves themes of evolution, existentialism, technology, and artificial intelligence through its poignant narrative. Featuring an iconic soundtrack of classical music, including Richard Strauss’s Also sprach Zarathustra, and groundbreaking visual effects that still hold up today, 2001: A Space Odyssey is widely regarded as one of the most significant films ever made.

Artificial intelligence (AI)

Finding a provably correct or optimal solution is intractable for many important problems. Soft computing is a set of techniques, including genetic algorithms, fuzzy logic and neural networks, that are tolerant of imprecision, uncertainty, partial truth and approximation. Soft computing was introduced in the late 1980s, and most successful AI programs in the 21st century are examples of soft computing with neural networks.
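To make “partial truth” concrete, here is a minimal fuzzy-logic sketch in Python. It assumes triangular membership functions, min/max as the fuzzy AND/OR operators, and a zero-order Sugeno-style weighted average to combine rule outputs into one number. The temperature sets, the fan-speed rules and every function name are hypothetical illustrations, not taken from any particular system.

```python
# Minimal fuzzy-logic sketch: truth is a degree in [0, 1], not just True/False.
# All set boundaries, rule outputs and function names are hypothetical.

def triangular(x: float, a: float, b: float, c: float) -> float:
    """Membership in a triangular fuzzy set: 0 at a, rising to 1 at b, back to 0 at c."""
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


def fuzzy_and(p: float, q: float) -> float:
    return min(p, q)  # standard (Zadeh) conjunction


def fuzzy_or(p: float, q: float) -> float:
    return max(p, q)  # standard (Zadeh) disjunction


def fuzzy_not(p: float) -> float:
    return 1.0 - p


def fan_speed(temp_c: float) -> float:
    """Map a temperature to a fan speed (percent) using three hypothetical rules."""
    cool = triangular(temp_c, 0, 10, 25)
    warm = triangular(temp_c, 15, 25, 35)
    hot = triangular(temp_c, 25, 40, 55)
    # Each rule pairs a firing strength with a constant output speed.
    rules = [(cool, 0.0), (warm, 50.0), (hot, 100.0)]
    total = sum(strength for strength, _ in rules)
    if total == 0.0:
        return 0.0  # no rule fires outside the covered temperature range
    # Weighted average of rule outputs (zero-order Sugeno-style defuzzification).
    return sum(strength * speed for strength, speed in rules) / total


if __name__ == "__main__":
    print(triangular(20, 15, 25, 35))
    print(round(fan_speed(20), 2))
    print(round(fan_speed(30), 2))
```

At 20 °C the “cool” and “warm” sets both hold partially (to degrees 1/3 and 1/2), so the output blends their rules into a 30 % fan speed; a crisp true/false rule would instead jump abruptly at its threshold.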

Researchers in the 1960s and the 1970s were convinced that their methods would eventually succeed in creating a machine with general intelligence and considered this the goal of their field. In 1965 Herbert Simon predicted, “machines will be capable, within twenty years, of doing any work a man can do”. In 1967 Marvin Minsky agreed, writing that “within a generation … the problem of creating ‘artificial intelligence’ will substantially be solved”. They had, however, underestimated the difficulty of the problem. In 1974, both the U.S. and British governments cut off exploratory research in response to the criticism of Sir James Lighthill and ongoing pressure from the U.S. Congress to fund more productive projects. Minsky and Papert’s book Perceptrons was understood as proving that artificial neural networks would never be useful for solving real-world tasks, thus discrediting the approach altogether. The “AI winter”, a period when obtaining funding for AI projects was difficult, followed.

Perceiving is the process through which machines sense and interpret their surroundings. This involves the use of sensors, cameras, and other devices to gather data, which is then processed to create a meaningful representation of the environment.
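As a rough illustration of turning raw sensor data into a representation of the environment, the Python sketch below converts range-sensor readings into a 2D occupancy grid. It assumes a single sensor at the centre of the grid that reports (bearing in degrees, distance in metres) pairs; the function names, grid size, cell size and maximum range are hypothetical choices. A real mapping system would typically fuse many readings probabilistically and also mark the free space along each beam, rather than only the cell where the beam hit something.

```python
import math
from typing import List, Tuple

# Hypothetical perception sketch: range readings -> occupancy grid.
# Grid size, cell size and maximum range are illustrative assumptions.

def readings_to_grid(
    readings: List[Tuple[float, float]],
    size: int = 11,
    cell_m: float = 1.0,
    max_range_m: float = 5.0,
) -> List[List[int]]:
    """Mark grid cells where the sensor (at the centre cell) detected an obstacle."""
    grid = [[0] * size for _ in range(size)]
    centre = size // 2
    for bearing_deg, dist in readings:
        if dist <= 0 or dist > max_range_m:
            continue  # discard invalid or out-of-range returns
        theta = math.radians(bearing_deg)
        x = dist * math.cos(theta)  # metres to the right of the sensor
        y = dist * math.sin(theta)  # metres ahead of the sensor
        col = centre + round(x / cell_m)
        row = centre - round(y / cell_m)  # row 0 is the top of the grid (largest y)
        if 0 <= row < size and 0 <= col < size:
            grid[row][col] = 1
    return grid


def render(grid: List[List[int]]) -> str:
    """Draw the grid as text: '#' for occupied cells, '.' for unknown or empty."""
    return "\n".join("".join("#" if cell else "." for cell in row) for row in grid)


if __name__ == "__main__":
    # Two obstacles in range, one reading beyond max_range_m that is ignored.
    scan = [(0, 2.0), (90, 3.0), (180, 10.0)]
    print(render(readings_to_grid(scan)))
```

The grid is deliberately crude, but it shows the basic step the paragraph describes: numbers from a sensor become a spatial structure that later planning or decision-making code can query.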

In response to growing concerns about data privacy, the Biden-Harris administration developed a Blueprint for an AI Bill of Rights that lists data privacy as one of its core principles. Although this blueprint is not binding legislation and carries little legal weight, it reflects the growing push to prioritize data privacy and to compel AI companies to be more transparent and cautious about how they compile training data.